Keeping track of Google’s algorithm updates is a full-time job, especially since Google doesn’t always explain why the SERP (Search Engine Results Page) fluctuates as often as it does. Most of these updates are made in the name of increasing relevance in the SERP. They benefit sites that produce quality, fresh, relevant content. The song remains the same.

But what happens when your site traffic takes a hit? Today, we’re going to discuss a couple of big(ish) Google algorithm updates from the past couple of weeks, one “official” and one unofficial. We’re also going to talk about the rhetoric you should use to explain SEO fluctuations that may or may not have occurred on your site as a result of these changes. Lastly, if you’re an advertiser, we’ll discuss how these updates affect you.


BERT (Bidirectional Encoder Representations from Transformers)

BERT is, in the words of the illustrious Ron Burgundy, kind of a big deal. BERT stands for Bidirectional Encoder Representations from Transformers. If you don’t remember that, it will probably have minimal impact on your life. What you should remember, however, is this:

“[BERT] represent[s] the biggest leap forward in the past five years, and one of the biggest leaps forward in the history of Search.”

That’s Google, talking about BERT’s impact on the organic search landscape. BERT began rolling out the week of October 21, 2019, for English-language queries.

What is BERT?

Basically, BERT allows Google to better understand words in the context of search queries.

Now, the goal itself is nothing novel. Queries are getting more conversational by the day as users increasingly treat their devices like companions and as voice search continues to proliferate. The Hummingbird update, in 2013, was Google’s famous first response to this trend. Where BERT differs, though, is in how it processes and understands language.

How does BERT work?

BERT is a neural network-based technique for natural language processing pre-training. To break that down in human-speak: “neural network” means “pattern recognition.” Natural Language Processing (NLP) means “a system that helps computers understand how human beings communicate.” So, if we combine the two, BERT is a system by which Google’s algorithm uses pattern recognition to better understand how human beings communicate so that it can return more relevant results for users.

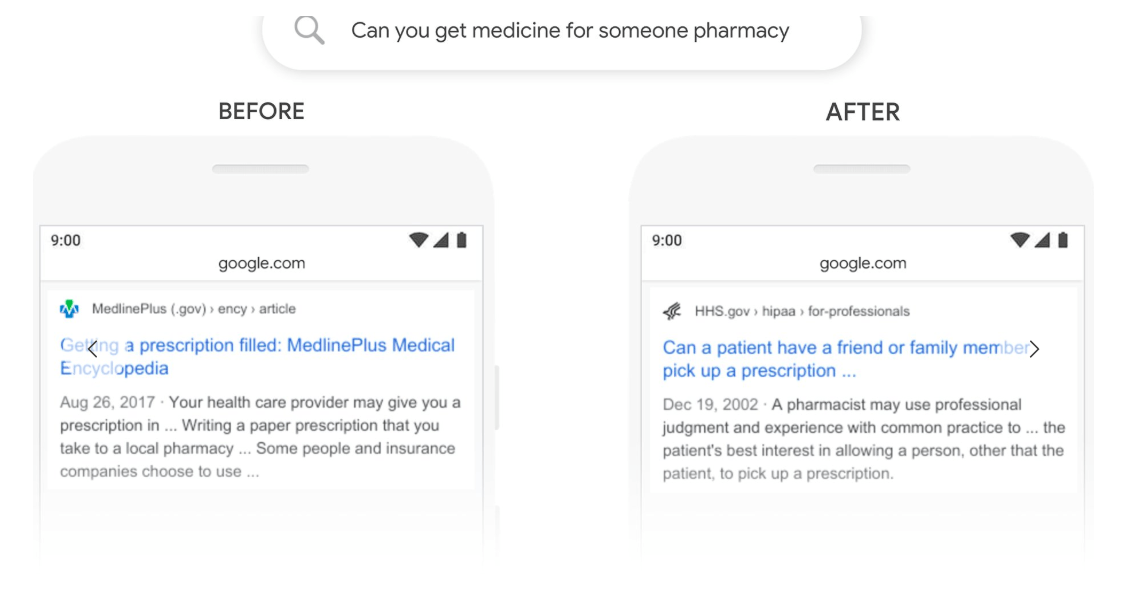

What does this look like in practice? Well, let’s say your beau has a proclivity for antibiotics but is too unwell to pick them up himself:

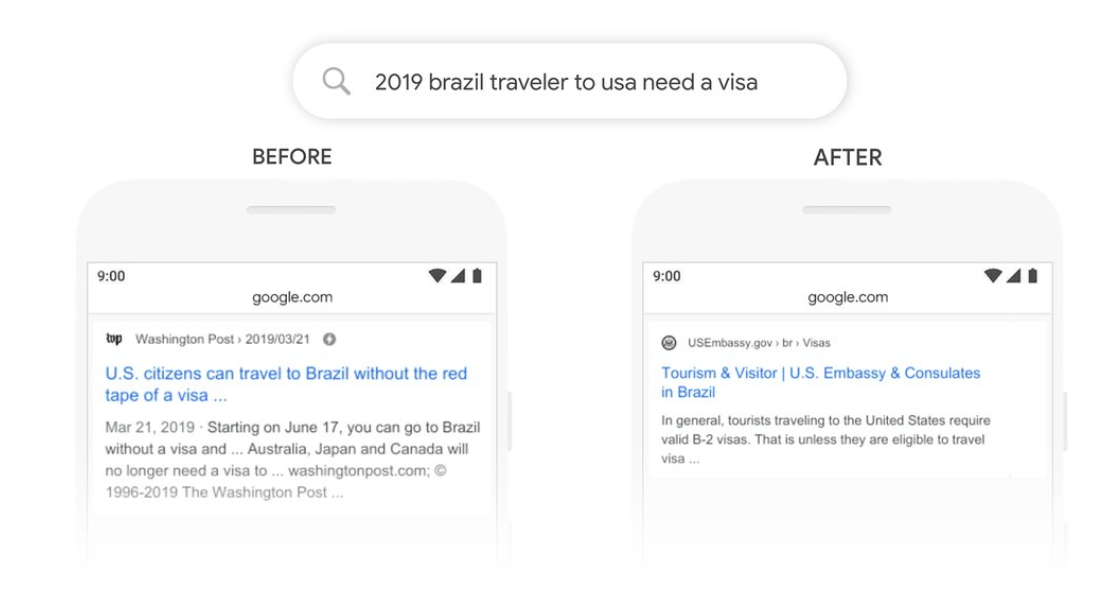

Google is now better able to understand that “can you get medicine for someone pharmacy” means the searcher wants to pick up a prescription for someone else, not for themselves. Ditto with “2019 brazil traveler to usa need a visa.”

BERT allows Google’s algorithm to better understand that “to” and “need,” in context, mean the searcher is a Brazilian who … well, needs a visa to travel to the United States, and not an American heading to Brazil! It seems straightforward to us; to a machine, it’s a subtle understanding that’s not easily coaxed.

How should I react to BERT?

Here’s a strange sort of kicker that might fly in the face of your instincts: you don’t actually have to do anything to “respond” or “prepare for” BERT.

The reason is this: As with all algorithm updates, Google did not create BERT to penalize certain sites. Nor did it create it to benefit others. If your site got hit by BERT, it’s likely that you were accruing traffic from specific queries that your pages weren’t the best fit for, so those queries shouldn’t have been sending you traffic in the first place. What’s more, that traffic was probably of pretty low quality. Take the first example: MedlinePlus might miss out on some sessions via the query “can you get medicine for someone pharmacy,” but the users who made that query were looking, at any rate, for information, not for a commercial solution.

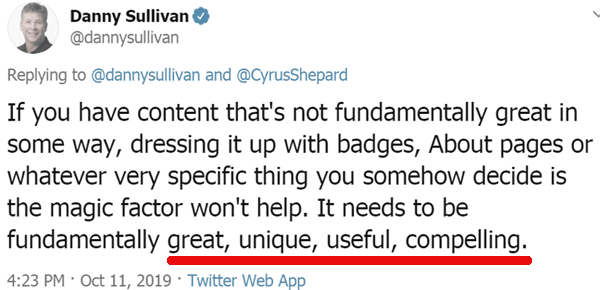

If you want to optimize for BERT, quite simply, focus on creating relevant, useful content, and plenty of it. And if you lost traffic from BERT, take solace in the fact that you probably weren’t benefiting much from that traffic anyway.

Possum 2.0? The November 6 local algorithm update

Now, let’s talk about everyone’s favorite flavor of algorithm update: the “unconfirmed update.”

This is the one where SEO forums and Twitter fill up with “chatter” about an update that is suspected to have taken place but that Google hasn’t confirmed. It’s very exciting, and very scary, because nobody knows for sure what’s going on, and speculation runs rampant.

Let’s talk about what we know about the local algorithm update that took place last week, how it may or may not be affecting your local SEO, and how you should react to it.

What was the November 6 local algorithm update?

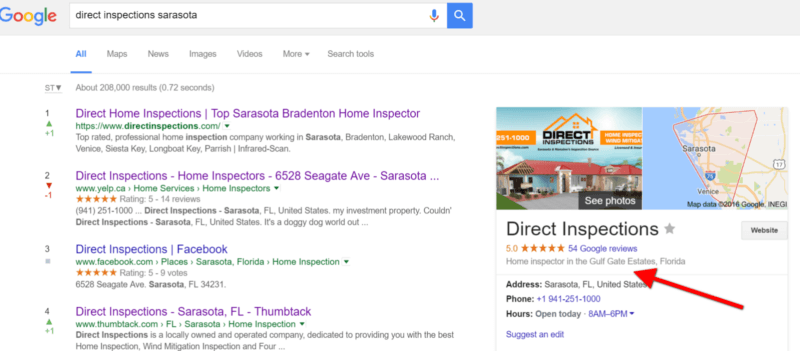

First of all, what in the world is a “local” algorithm update? Well, simply put: It’s an algorithm update aimed at delivering more relevant results for local queries. The most famous example of this was Possum, which rolled out in 2016 and improved search equity by giving businesses just outside city limits their fair share of the local search pie (if they were, in fact, closer to the searcher).

Since then, local search, at least on the organic side of the coin, has remained pretty stagnant. Until now! The update that rolled out last week echoes the intentions of Possum: It’s all about proximity. The sites that saw the biggest losses in 2016 were those that weren’t actually in the zip code of a user at the time the query was made.

The results after this past week’s local algorithm update again have to do with proximity: Google My Business listings that were closer to the searcher won out, while those that were farther lost traction.

How do I optimize for a local algorithm update?

This one’s a little more complex. In the past, algorithm updates that focus on proximity have led to an increase in spam. It’s an unwanted byproduct that Google has not been able to clean up this time around.

This, however, is most likely not going to be the reason you lose traffic from this update (if you lose traffic at all). If you see an influx of local business listings that weren’t there prior to the update and that have no reviews and suspicious names, report them using Google’s Business Redressal Complaint Form.

Otherwise, you can safely assume that if you lost traffic from this update, your local listing wasn’t actually the closest one to the users in your area who were making the majority of those queries.

Lastly, the best way to “optimize” for this local update may not be via organic search. Marketers active on Google Ads and/or Facebook Ads can compensate for lost local traffic with precise geotargeting, more aggressive bidding, Local Services Ads, and locally inspired ad copy and creative.

What we talk about when we talk about algorithm updates…

If there’s a theme here, it’s this: Don’t panic about either of these algorithm updates or, really, any that occur in the future. For the most part, Google’s algorithm updates increase relevance and deliver better search results.

That’s not to say that all sites that lose traffic from an algorithm update “deserve” to do so. But it would be a mistake to think that you can react a certain way to neutralize the effects of a given update. In organic search (like in life!), it’s better to be proactive than reactive. If you can focus on creating relevant, fresh content that meets the needs of your users, and if you can shore up any technical holes that might be bogging down performance, you’ll win more often than you lose.