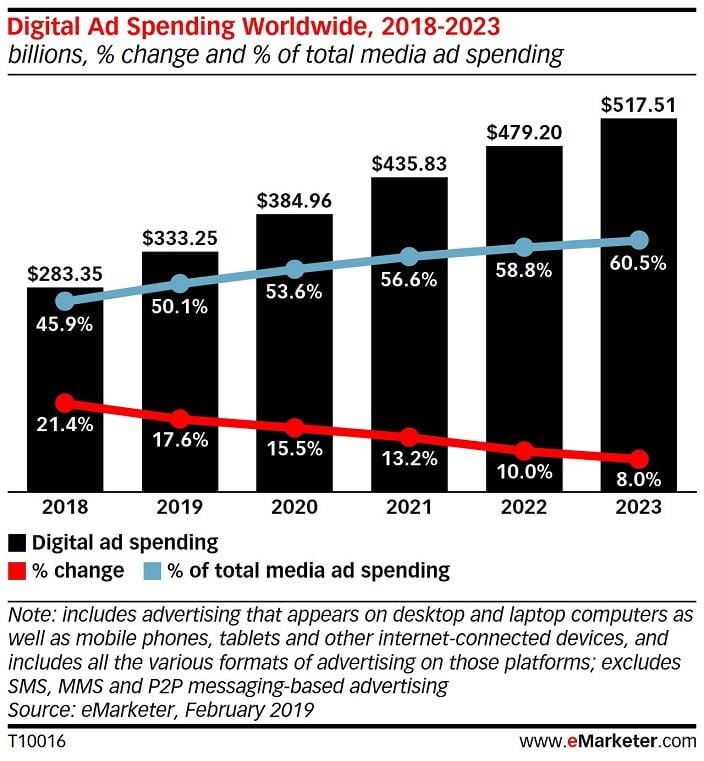

Online advertising is expensive. And spend keeps going up: in the next couple of years, digital ad spend will hit the $500 billion mark.

The reason is simple: Everybody’s doing it.

So, to outperform your competitors, you need to keep optimizing your campaigns to make sure you’re making the most of your ad spend. And a big part of regular ad optimization is testing your ads, usually through A/B testing and ad rotation.

But you may not have tried a technique known as copy testing.

In this guide, we’ll give you the full breakdown of what copy testing is, why it’s seeing a resurgence, and how you can get the most out of it to increase your advertising ROI and drive sales.

Let’s get started.

What is copy testing?

Copy testing is a form of market research that determines the likelihood of an advertisement’s success through consumer feedback.

Copy testing can be a crucial component of an ad campaign’s success, as it enables advertisers to anticipate whether an ad will be compelling before they pay to promote it online. However, in the past 15 years or so, copy testing has fallen out of favor. Many advertisers see it as outdated. Their primary critiques are that copy testing takes too long, costs too much, and often results in bland campaigns (as research groups tend to err against originality).

But, with the introduction of automated copy testing in the past few years, copy testing is coming back. Plus, you can now use IF functions to test different copy versions in both your Microsoft Advertising (formerly Bing Ads) and Google Ads campaigns.

What is automated copy testing?

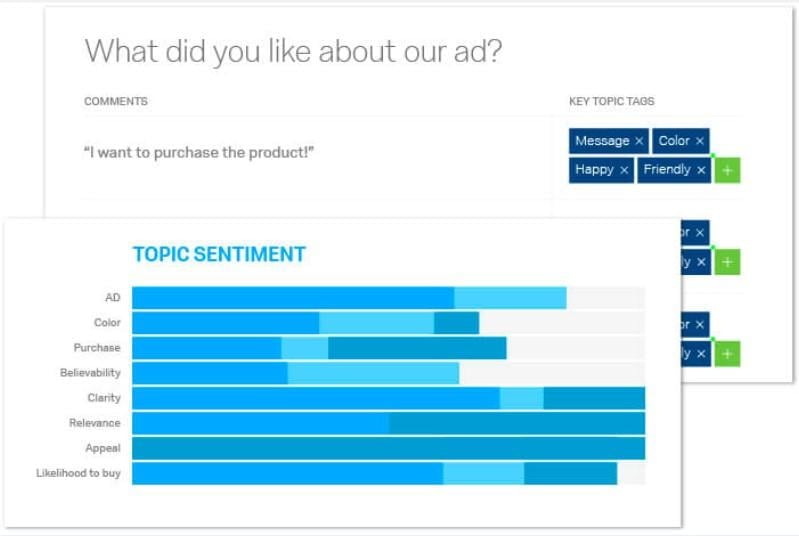

Automated copy testing is a newer model of copy testing that uses software to send variations of your business’s ads to real people who have registered to give feedback. By crowdsourcing your ad campaign’s test, you cut down on both time and cost. And, with modern analytics built in, automated copy testing tools parse your data for you.

For example, copy testing app Qualtrics compiles your market research group’s responses into easy-to-understand, actionable graphs.

As for the creativity criticism: time and again, modern consumers reward creativity.

Take a look at this ad, for instance.

This ad is always worth a watch. Are we really concerned, then, that a modern copy testing research group won’t like creativity? This video has 56,600,000 views on YouTube (and it was a TV ad).

What about A/B testing?

The more experienced digital advertisers out there may be thinking, “I’m already A/B testing my ad campaigns and seeing fantastic results. Why would I spend money to show my ads to people who have no interest in buying from me?”

The answer is simple: Pay more now to make more later.

You’re familiar with ROI.

We’re all looking to maximize our return on investment. And A/B testing helps with that—but it’s missing one crucial component. You’re still paying for the losing variation.

Copy testing enables you to pay more upfront but make more down the line.

And even if we ignore the cost, A/B testing can be problematic. How many A/B advertising tests do you run which don’t reach statistical significance? How many do you run where the winning ad design doesn’t actually match up with the rest of your website, so your sales funnel begins to look like a patchwork quilt? How many were false positives which, once implemented, actually cost your business money in the long run?

The long and short of it is this: Only 1 in every 7 A/B tests results in a statistically significant winner. And that’s a number taken from experienced advertisers. There are a lot more optimizers throwing A/B tests out into the ether without fully implemented, full-funnel analytics tracking.
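If you want to sanity-check whether one of your own A/B tests actually reached significance, here’s a minimal sketch in Python. It assumes a standard two-proportion z-test and a 0.05 threshold; neither comes from the 1-in-7 figure above, and the function names and sample numbers are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both ads convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        # No variation at all (e.g. zero conversions): nothing to detect.
        return 0.0, 1.0
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def is_significant(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """True when the difference clears the chosen significance threshold."""
    _, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return p_value < alpha


# Hypothetical test: ad A converts 50 of 1,000 clicks, ad B converts 65 of 1,000.
z, p = two_proportion_z_test(50, 1000, 65, 1000)
print(f"z = {z:.2f}, p = {p:.3f}, significant: {is_significant(50, 1000, 65, 1000)}")
```

With these made-up numbers, variation B converts 30% better than A (6.5% versus 5%), yet the p-value is roughly 0.15, so the test hasn’t reached significance at the 0.05 level.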

Sure, A/B testing may give you concrete data (and there’s nothing more seductive to a digital marketer than concrete data), but it can often be untrustworthy data.

Copy testing, on the other hand, gives you both concrete quantitative data and qualitative data—insight from people like your prospective customers.

But before we get into how to get the best quantitative and qualitative data out of your copy testing, let’s take a quick look at the factors that make an ad campaign successful in the first place.

Creative and targeting: crucial factors in ad campaign success

This section might as well be called “Things You Need to Get Right in Copy Testing.”

Essentially, it’s important that before you start copy testing, you identify the factors which affect your campaign’s success or failure.

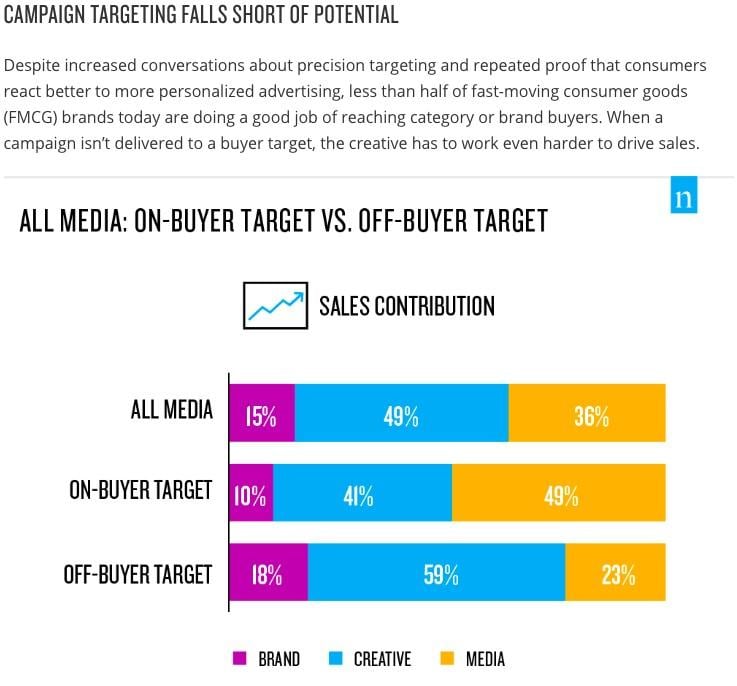

Here’s how Nielsen broke down different advertising elements by percent of sales contribution:

In short, test your creative. Unsurprisingly, your ad design is still (far and away) the most impactful variable when it comes to the success or failure of a campaign.

Then test your targeting. This is especially important in copy testing because you’re selecting a small group of people to represent your larger audience.

If you don’t get insight from the right people, that insight will be useless down the line.

Create multiple research groups to determine how each demographic grouping responds to your campaign’s creative. Then, when your ad goes live, target the demographic that responded best.

If you get targeting right, you’ll be ahead of more than half your competitors.

In the same Nielsen study as above, they determined that “less than half of fast-moving consumer goods brands today are doing a good job of reaching category or brand buyers.” And “when a campaign isn’t delivered to a buyer target, the creative has to work even harder to drive sales.”


Demographics and context: crucial factors for successful copy testing

As with most ad testing, copy testing comes down to demographics and context.

First and foremost, your market research group needs to be composed exclusively of people within your target market. There’s no point in learning what 17-year-old boys think of your ad campaign targeting 65-year-old women.

That should go without saying.

What doesn’t go without saying is that the platform on which your ad campaign will be seen impacts your ad campaign’s success.

Your market research group needs to see that campaign in the same way your target market will. Imagine if you showed your ad campaign to your copy testing group on a projector screen, out of context, with no desktop or mobile newsfeed around it. Do you think the insight you get would be reliable? Or would it only be reliable if your target market (once the ad campaign was live) was also viewing your ad without context on a projector screen?

Don’t think that platform or context can have that much of an effect?

Consider that the conversion rate of desktop shoppers is more than 300% better than the conversion rate of mobile shoppers.

Context is everything in advertising. There’s a reason the average cost of an advertisement on Google Ads is $2.32 and only $1.72 on Facebook. How and where you see an ad impacts how likely you are to click on it.

Now that you have a better understanding of why and how to copy test your ad campaigns, let’s take a quick look at how to get the most out of it.

How to get the most out of copy testing

There are three ways that you can make sure that you’re getting the best, most impactful results from your copy testing:

- Target your copy testing group as you would your ad.

- Ask the right questions.

- Make sure the data is actionable.

Let’s go over how you can implement each of these.

Target your copy testing group as you would your ad

We touched on this above, but it’s worth repeating: Ensure your research group is, demographically, similar to your campaign’s target market.

There’s no point in creating an ad campaign that targets working women with young children, for instance, and then getting together a research group of retired men.

Remember, you’re not going to get statistically significant results with copy testing. It’s not a large group of people. Instead, it’s a small, targeted group of people giving you objective insight. And that’s a valuable thing only if those people match your ideal consumers.

For example, Qualtrics has a searchable and filterable user base of over 10,000 prospective ad viewers for you to choose from, each with a detailed profile.

Ask the right questions

What are you looking to learn from your research group? A copy test is, historically, run with two primary elements in mind:

- Recall: How well does a prospective consumer remember the message of your ad? How well do they remember the brand, if not the message?

- Persuasion: How well does your ad persuade prospective customers to engage?

And these are excellent places to start, especially recall.

If you’re running a campaign that isn’t built around click-throughs (a brand awareness campaign on Facebook, for instance), your campaign is only effective if it’s memorable. So make sure your ad audience gives their initial thoughts and, 48 hours later, gives you insight into how well they remember the details.
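To turn that 48-hour follow-up into a number you can compare across ad versions, here’s a rough sketch. The `FollowUp` record and its fields are hypothetical; your copy testing tool will export its own format, so treat this as an illustration of the arithmetic rather than a ready-made integration.

```python
from dataclasses import dataclass


@dataclass
class FollowUp:
    """One respondent's answers at the 48-hour follow-up (hypothetical fields)."""
    respondent_id: str
    recalled_brand: bool
    recalled_message: bool


def recall_rates(follow_ups):
    """Share of respondents who remembered the brand and the message."""
    total = len(follow_ups)
    if total == 0:
        return {"brand": 0.0, "message": 0.0}
    brand = sum(f.recalled_brand for f in follow_ups)
    message = sum(f.recalled_message for f in follow_ups)
    return {"brand": brand / total, "message": message / total}


# Made-up responses from a four-person research group.
group = [
    FollowUp("r1", recalled_brand=True, recalled_message=True),
    FollowUp("r2", recalled_brand=True, recalled_message=False),
    FollowUp("r3", recalled_brand=True, recalled_message=False),
    FollowUp("r4", recalled_brand=False, recalled_message=False),
]
print(recall_rates(group))
```

A gap like the one in this invented group, where most people remember the brand but few remember the message, would suggest the creative is recognizable but the copy itself isn’t sticking.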

Modern copy testing tools go much further than these two ideas. They enable you to ask every question under the sun—but you don’t want to do that. Remember to stay focused in your questions to get the best results.

Here are a few questions we recommend:

- Did this ad grab your eye?

- What words or phrases come to mind when you see this ad?

- What is something that might deter you from clicking/engaging?

- What do you think would happen if you clicked on this ad?

Make the data actionable

If you get qualitative feedback, like “what words or phrases come to mind when you see this ad,” can you make that actionable?

If you get quantitative feedback, like “on a scale of 1-10, how much did this ad resonate with you,” can you make that actionable?

Unlike A/B testing, copy testing requires you to interpret and translate data. It doesn’t just say, “This one is better. This one is worse.” Instead it says, “This one didn’t apply to me because you seem to think that single mothers are all exhausted, but I don’t feel that way.” And, from a conversion optimization standpoint, insights like that may be more valuable, because you know what to do and why you should do it.
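One way to start that interpretation is to tally the words and phrases people gave you and to average the 1-10 scores by research group. The sketch below assumes respondents separate phrases with commas and that each rating arrives as a (segment, score) pair; both are assumptions for illustration, as are the function names and sample data.

```python
from collections import Counter, defaultdict
from statistics import mean


def top_phrases(responses, n=3):
    """Count how often each normalized word or phrase appears across responses."""
    counts = Counter()
    for response in responses:
        # Assumption: respondents separate their phrases with commas.
        for phrase in response.split(","):
            cleaned = phrase.strip().lower()
            if cleaned:
                counts[cleaned] += 1
    return counts.most_common(n)


def mean_score_by_segment(ratings):
    """Average 1-10 ratings per segment from (segment, score) pairs."""
    by_segment = defaultdict(list)
    for segment, score in ratings:
        by_segment[segment].append(score)
    return {segment: mean(scores) for segment, scores in by_segment.items()}


# Made-up feedback from two research groups.
phrases = ["Tired, stressful", "stressful, expensive", "Stressful"]
ratings = [("working mothers", 8), ("working mothers", 6), ("retired men", 3)]
print(top_phrases(phrases, n=1))
print(mean_score_by_segment(ratings))
```

The counts won’t tell you why a phrase keeps coming up, so read the full responses behind the most frequent ones before you change the creative.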

With A/B testing results, you’re testing a hypothesis. But you can’t be sure that a variation won because your hypothesis was correct.

It could be something else entirely. It could be because the connection was faster on your variation, so people (subconsciously) trusted it more than the slow original. Or it could be because it’s September, and your audience loves September.

Get the most out of your copy testing

Now that you understand the value of copy testing your ad campaigns, you can ensure you’re getting truly valuable insight by asking the right questions of the right people in the right context.

Copy testing, more than many other digital marketing strategies, can be expensive and fruitless if done badly. But if done right, you could spend a few hundred dollars to save yourself thousands once your ad campaign goes live.