Google is serious about its ranking algorithm, updating it constantly to provide users with the best search experiences possible. This also means penalizing pages or sites that violate Google’s Webmaster Guidelines.

You can incur a Google penalty by intentionally practicing black hat SEO, inadvertently through improper site maintenance, or simply due to an algorithm update. Regardless, Google penalties negatively impact your search rankings, and in some cases, your pages or entire website could be removed from results.

So in this post, we’re going to cover what NOT to do (and what to do instead) to prevent a Google penalty and protect your site from a traffic drop. We’ll cover:

- Algorithmic vs manual Google penalties and what to expect.

- How to check for and fix a manual Google penalty.

- Seven common Google penalties and how to avoid them.

By the end, you’ll be equipped to preserve your rankings and continue growing your website traffic.

What is a Google penalty?

A Google penalty occurs when Google detects that a website violates its Webmaster Guidelines. There are two types of penalties, and both have the same consequence: a drop in ranking and traffic.

Algorithmic penalties

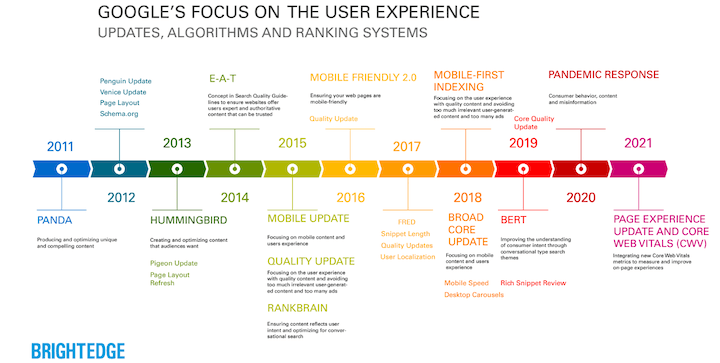

Every year, Google makes changes in its algorithm to continue serving the best results for its searchers. Some of the notable Google updates are Panda, Penguin, Pigeon, and Hummingbird.

Some algorithm updates are designed to lower the rank of guideline-violating pages, like Panda (keyword stuffing, grammatical errors, and low-quality content) and Penguin (black hat linking tactics). Others are designed to favor pages that align with newly prioritized ranking factors, like Pigeon (solid local signals) and Hummingbird (content that matches the meaning and intent behind a query).

Speaking of which, do you know about the page experience and mobile-first indexing updates?

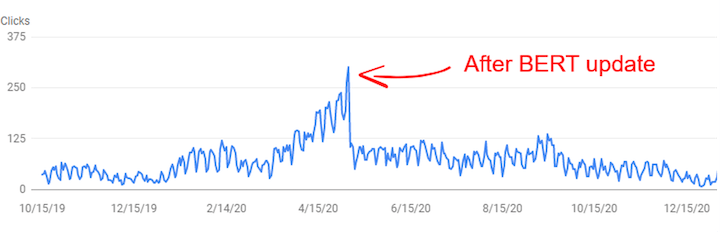

After an algorithm update, a website may notice a drop in ranking, either because it violated guidelines or because other sites better aligned with certain ranking factors. Here’s an example of a site’s traffic data after the BERT update.

How to fix an algorithmic Google penalty

Google doesn’t report algorithmic penalties anywhere, so you can’t check for them directly. The best thing you can do is see whether your drop in traffic aligns with the release of an algorithm update. If it does, learn as much as you can about that update so you can identify what to fix in existing content and what to adjust moving forward.
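If you export daily organic sessions from your analytics tool, a few lines of code can quantify how traffic changed around an update’s release date. This is a minimal sketch under our own assumptions: the function name `traffic_change_around`, the 14-day window, and the sample numbers are all hypothetical, and a drop that lines up with an update suggests a connection but does not prove one.

```python
from datetime import date, timedelta
from statistics import mean


def traffic_change_around(daily_sessions, update_date, window_days=14):
    """Return the fractional change in average daily sessions after update_date.

    daily_sessions maps a date to that day's organic sessions.
    A result of -0.30 means the average fell 30% compared with the prior window.
    """
    before = [
        sessions for day, sessions in daily_sessions.items()
        if update_date - timedelta(days=window_days) <= day < update_date
    ]
    after = [
        sessions for day, sessions in daily_sessions.items()
        if update_date <= day < update_date + timedelta(days=window_days)
    ]
    if not before or not after:
        raise ValueError("not enough data on one side of the update date")
    return (mean(after) - mean(before)) / mean(before)


# Hypothetical data: 1,000 sessions a day, falling to 700 from the update date on.
update = date(2024, 1, 15)  # placeholder date, not a real Google update
sessions = {
    update + timedelta(days=offset): (1000 if offset < 0 else 700)
    for offset in range(-14, 14)
}
change = traffic_change_around(sessions, update)
print(f"Change after update: {change:.0%}")
```

Comparing equal-length windows on either side of the date keeps weekday and weekend patterns roughly balanced, which is why the sketch uses 14 days rather than a single day before and after.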

Depending on the update and the extent to which your website misaligned with it, your fixes may or may not lead to a restoration of ranking and traffic.

While algorithm updates happen frequently, they all serve to reward sites for EAT (expertise, authoritativeness, and trustworthiness) and optimal technical performance, so those should be your constant points of focus.

Manual penalties

Manual penalties are issued by human reviewers at Google, either for potentially inadvertent issues like poor content quality or security problems, or for deliberate attempts to manipulate Google’s algorithm using black hat SEO. Unlike algorithmic penalties, manual penalties are easy to identify and fix.

How to fix a manual Google penalty

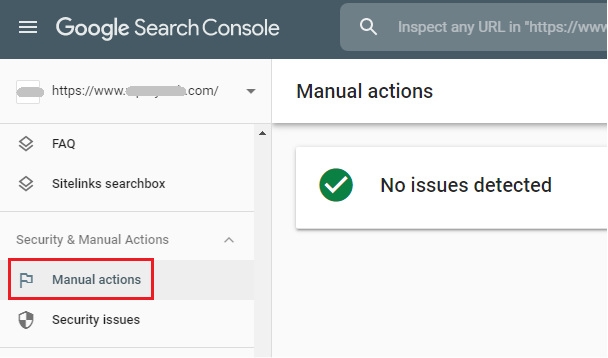

There are several Google penalty checker tools available, but you can also just use Google Search Console.

Locate the Security & Manual Actions tab in your dashboard and click Manual actions.

There you can see which policy you violated, which pages are affected, and how to fix the issue.

Once you’ve fixed the issue, you can submit a review request. Employees at Google will review it and, if the issue is properly fixed, approve the request and reindex your page.

What are the consequences of a Google penalty?

The result of any penalty is a drop in rank, but the severity of the drop depends on the type of penalty issued.

- Keyword-level penalties: Ranking will drop for a particular keyword.

- URL or directory-level penalties: Ranking will drop for a particular URL.

- Domain-wide or sitewide penalties: Ranking will drop for several URLs and keywords across your site.

- Delisting or de-indexing: This is the highest level of penalty imposed by Google, where they remove your domain from the Google index. As a result, none of your website’s content will be shown on Google.

How long do Google penalties last?

Google penalties last until you fix them. If a penalty goes unfixed for long enough, the alert will disappear from your Search Console, but the penalty’s consequences will remain in effect. In other words, you’ve lost your chance to rectify things with Google.

But once the penalty is lifted, your site may or may not recover its traffic and rankings. For more information, check out this post on Google penalty recovery timelines.

The top 7 reasons for Google penalties and how to prevent them

Every business strives to get on the first page of Google so they can increase traffic to their site and ultimately earn more customers.

The way to achieve that (through SEO) is a lengthy process that requires effort and patience. This is why many—especially those who have just started a blog or website—are tempted to use shortcuts to improve their rank. But these tactics only backfire in the form of Google penalties.

Here you will find the seven most common penalties and what you can do to prevent and/or fix them.

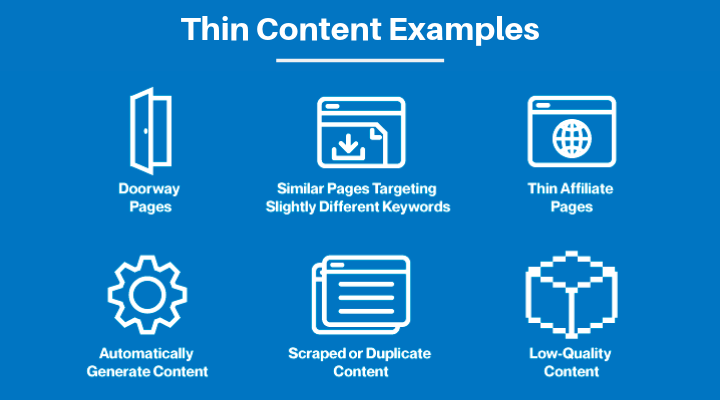

1. Thin content and doorway pages

This is when website owners focus on quantity over quality in their SEO content, thinking more content means more traffic. They may use content-generating tools, publish short-form articles, or scrape content from other sources.

Not only are these bad SEO practices that Google can detect, but low-quality content also reflects poorly on your business.

The goal of the 2014 Panda 4.0 algorithm update was to reduce the visibility of low-quality content and doorway pages in search results. eBay reportedly lost around 80% of its organic rankings as a result.

How to prevent the thin content penalty:

- Don’t fully outsource or attempt to mass-produce your content. Mass-produced content is rarely high quality, and outsourcing can lead to content that is off-brand and disjointed.

- If you need help scaling your quality content, hire freelancers you can work closely with and who specialize in your industry to produce pages that bring value to your readers.

- Do proper keyword research to make sure you identify the right keywords to target and that your content matches the intent of the query.

- Create pillar pages or cornerstone content instead of doorway pages.

- Combine short pages optimized for similar keywords into one longer page that contains more information on one keyword.

2. Hidden text and links

Hiding text or links to manipulate rankings rather than to help users goes against Google’s Webmaster Guidelines. Text and links can be hidden in several ways, such as by:

- Setting the font size to 0

- Using white text on a white background

- Hiding text behind an image

- Using CSS to position text off-screen

- Making links the same color as the background

How to prevent the hidden content penalty

For starters, never do it intentionally. If you have to hide something, it probably shouldn’t be on your page.

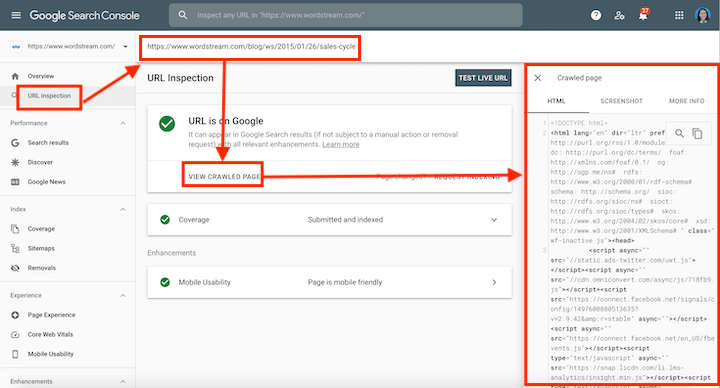

If you didn’t do this intentionally, go to the URL inspection tool in Search Console, enter the affected page’s URL into the search box, and then click “View crawled page.” There you can check the HTML for any hidden links or CSS.
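If you have many pages to review, a short script can flag candidates for you. The sketch below is our own illustration, not a Google tool. It assumes you have the page’s HTML as a string and it only inspects inline style attributes, so text hidden through an external stylesheet would be missed. The names `HIDING_PATTERNS` and `find_hidden_styles` are made up for this example.

```python
import re
from html.parser import HTMLParser

# Inline-style patterns that commonly hide text. Illustrative, not exhaustive.
HIDING_PATTERNS = [
    r"font-size\s*:\s*0(?![.\d])",            # font-size: 0 (but not 0.9em)
    r"display\s*:\s*none",
    r"visibility\s*:\s*hidden",
    r"(?:left|text-indent)\s*:\s*-\d{3,}",    # e.g. left: -9999px
]


class HiddenStyleFinder(HTMLParser):
    """Collect (tag, style) pairs whose inline style matches a hiding pattern."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        style = dict(attrs).get("style") or ""
        if any(re.search(p, style, re.IGNORECASE) for p in HIDING_PATTERNS):
            self.findings.append((tag, style))


def find_hidden_styles(html):
    """Return every element in html whose inline style looks like it hides content."""
    finder = HiddenStyleFinder()
    finder.feed(html)
    return finder.findings


sample = (
    '<p>Visible copy</p>'
    '<p style="font-size:0">cheap widgets best widgets</p>'
    '<a href="/x" style="position:absolute; left:-9999px">widgets</a>'
)
for tag, style in find_hidden_styles(sample):
    print(tag, "->", style)
```

Treat every match as something to review by hand rather than as proof of a violation. Styles like `display:none` have legitimate uses, such as collapsed menus.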

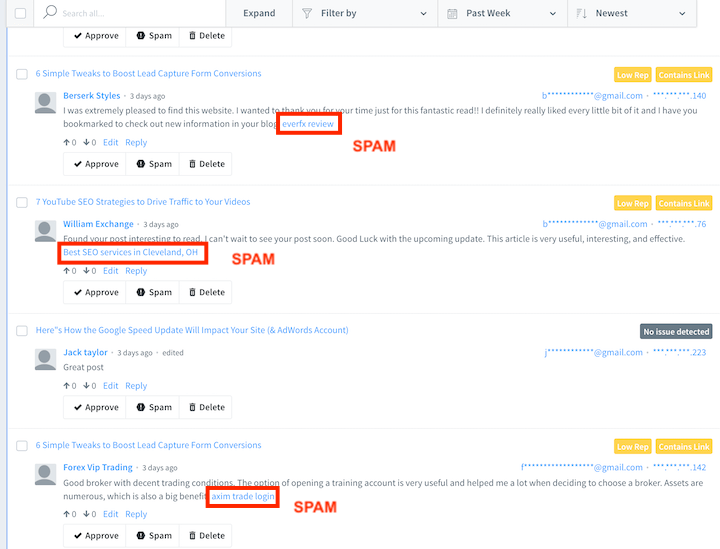

3. User-generated spam

If you run a forum, allow guest posts, or have comments enabled on your blog, it could be overwhelmed by spam bots or bad actors. Spam links may link out to poor quality or inappropriate pages, which compromises the T in EAT.

Or real people may leave a comment on your blog with one or more irrelevant links, just to get a backlink from your site and increase their domain authority.

How to prevent the user-generated spam penalty

Here are four ways you can prevent user-generated spam on your website or forum.

Comment moderation tools

We use Disqus to filter, delete, and ban spammy comments. You can also review and approve comments before they go public on your site. If you aren’t able to keep up with moderation, even with a plugin or tool, then disable commenting altogether.

Anti-spam tools

Spammers use automatic scripts to flood your comment section. Integrate Google reCAPTCHA with your site to prevent comment spam.

Nofollow and UGC attributes

If a guest poster or commenter posts an appropriate link, but one you don’t want your site to be associated with, you can add attributes that make it a no-follow link. This asks Google not to follow the link off of your page or pass link juice from your site to the linked site.

These include the rel="nofollow" and rel="ugc" attributes. For example:

Original link: <a href="http://www.website.com/">My Website</a>

No-follow version: <a href="http://www.website.com/" rel="nofollow">My Website</a>

UGC version: <a href="http://www.website.com/" rel="ugc">My Website</a>
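If your platform doesn’t add these attributes for you, you could apply them to user-submitted HTML before saving it. The following is a rough sketch, assuming comments arrive as small HTML snippets with double-quoted attributes. The function name `mark_links_as_ugc` is our own, and the regex approach is deliberately simple, so for untrusted input a proper HTML sanitizer is the safer choice.

```python
import re

# Matches an opening <a ...> tag and captures its attribute text.
A_TAG = re.compile(r"<a\b([^>]*)>", re.IGNORECASE)
# Matches a double-quoted rel attribute (but not data-rel or similar).
REL_ATTR = re.compile(r'(?<![\w-])rel\s*=\s*"([^"]*)"', re.IGNORECASE)


def mark_links_as_ugc(html, values=("ugc", "nofollow")):
    """Add the given rel values to every <a> tag in a snippet of user-submitted HTML."""

    def rewrite(match):
        attrs = match.group(1)
        existing = REL_ATTR.search(attrs)
        if existing:
            # Keep whatever rel values are already there and append the missing ones.
            tokens = existing.group(1).split()
            tokens += [v for v in values if v not in tokens]
            rel = 'rel="' + " ".join(tokens) + '"'
            attrs = attrs[:existing.start()] + rel + attrs[existing.end():]
        else:
            attrs += ' rel="' + " ".join(values) + '"'
        return "<a" + attrs + ">"

    return A_TAG.sub(rewrite, html)


comment = '<a href="http://www.website.com/">My Website</a>'
print(mark_links_as_ugc(comment))
```

The sketch combines both values in one attribute because rel accepts a space-separated list, so a link can be marked as user-generated and no-follow at the same time.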

Noindex meta tag

If you allow users to post articles on your site, you can add a noindex meta tag to those pages. This way the page will be accessible through your website but won’t be visible in search results or considered in Google’s ranking algorithm.

Add <meta name="robots" content="noindex"> inside the <head> section of the page.
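To confirm the tag actually made it onto a user-submitted page, you can check the page’s HTML. Here is a minimal sketch that assumes you already have the HTML as a string. The names `RobotsMetaFinder` and `has_noindex` are hypothetical, and the check only covers the robots meta tag, not HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Record the directives of any <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "meta" and (attr_map.get("name") or "").lower() == "robots":
            content = attr_map.get("content") or ""
            # The content value is a comma-separated list such as "noindex, follow".
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def has_noindex(html):
    """Return True if the page carries a robots meta tag with the noindex directive."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head><body></body></html>'
print(has_noindex(page))
```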

4. Unnatural or poor links to your site

Google’s Penguin algorithm update, first released in 2012 and folded into the core algorithm in 2016, was designed to detect unnatural link building.

Backlink building is a highly effective SEO strategy that helps increase your page authority, but only if the links come organically from high-quality websites.

How to prevent the unnatural link penalty

Of course, use an appropriate link-building strategy that does NOT include:

- Buying or selling links

- Link exchanges (“link to my website and I’ll link to yours”)

- Forum profile / Signature links

- Blog comment links

- Article directory links

- Building too many links in a short span of time

- Private blog network (PBN) links

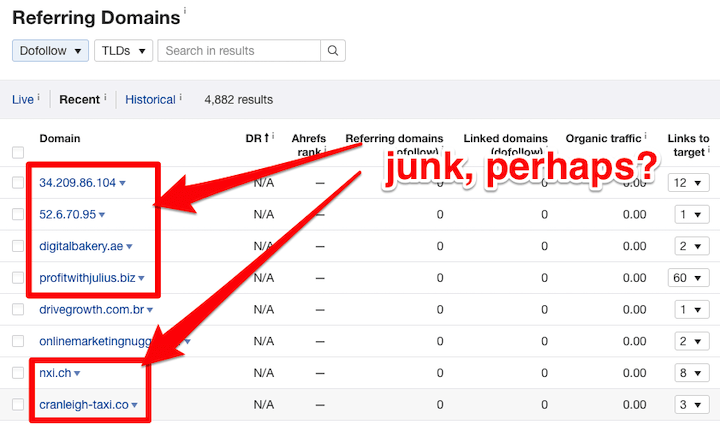

Perform regular backlink audits

You can also get spammy links to your website unintentionally. Use Google Analytics, Search Console, or an SEO tool like SEMrush or Ahrefs to analyze your backlink profile and disavow toxic links.

Here’s an example of just one part of a backlink profile from an Ahrefs backlinking report.
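Once your audit has produced a list of toxic links, Google’s disavow tool expects a plain text file with one entry per line: either a full URL or a whole domain prefixed with `domain:`. Lines starting with `#` are treated as comments. The sketch below builds such a file. The function name `build_disavow_file` and the `.example` domains are placeholders, not real findings.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Return the text of a disavow file: an optional comment, then domains, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Disavow every link from these domains.
    lines += [f"domain:{domain}" for domain in sorted(set(domains))]
    # Disavow only these specific pages.
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


disavow_text = build_disavow_file(
    domains=["spam-directory.example", "link-farm.example"],
    urls=["http://blog.example/spam-page.html"],
    note="Reviewed during backlink audit",
)
print(disavow_text)
```

Disavowing a whole domain is the broader option, so it makes sense to reserve it for sites where every link looks toxic and list individual URLs otherwise.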

5. Keyword stuffing

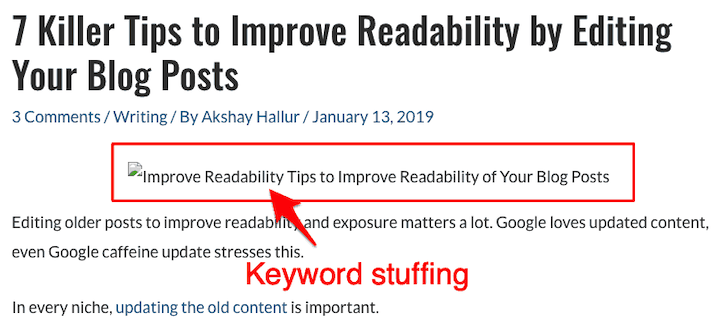

On-page SEO, like adding keywords to the title, headings, body, meta description, and alt text, helps Googlebot understand what your page is about. However, intentional keyword stuffing is a black hat SEO tactic that incurs a Google penalty.

By now, you know what keyword stuffing in body copy looks like, but you can also be penalized for keyword stuffing in alt text.

How to prevent the keyword stuffing penalty

- Incorporate keywords naturally in your content, as you would explain something in person.

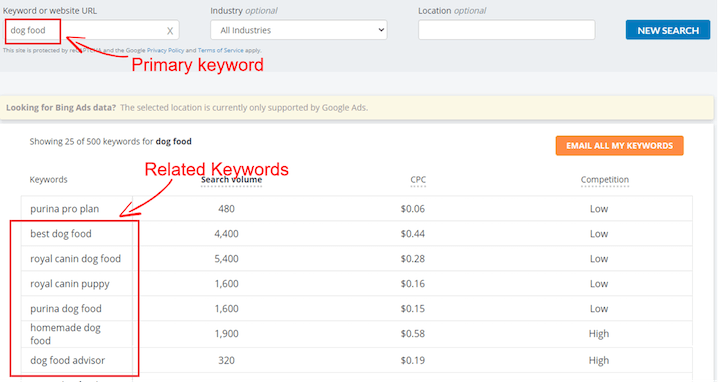

- Instead of focusing only on one keyword, use long-tail or LSI keywords. You can use keyword research tools for help.

WordStream’s Free Keyword Tool
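If you want a rough signal for pages that may be over-optimized, you can measure how much of the copy a single phrase takes up. This is only a sketch under our own assumptions: `keyword_density` is a hypothetical helper, and Google publishes no density threshold, so treat a high number as a prompt to reread the page rather than as a verdict. The same check works on alt text.

```python
import re


def keyword_density(text, phrase):
    """Share of the words in text that belong to occurrences of phrase (0.0 to 1.0)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the phrase's words appear in sequence.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


stuffed = (
    "Cheap widgets here. Buy cheap widgets now, because cheap widgets "
    "are the best cheap widgets."
)
natural = "Our widgets are built to last, and every order ships with a two-year warranty."

print(f"stuffed: {keyword_density(stuffed, 'cheap widgets'):.0%}")
print(f"natural: {keyword_density(natural, 'cheap widgets'):.0%}")
```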

6. Hacked website

If hackers gain access to your website, not only can they compromise confidentiality, but they can also inject malicious code, add irrelevant content, or redirect your site to harmful or spammy pages.

Your site will see severe ranking drops on all search queries, and Google may delist your whole website from search results for this penalty.

How to prevent the hacked website penalty

There are several ways you can tighten your website’s security:

- Keep your content management system updated

- Use strong passwords and change them regularly

- Install an SSL certificate

- Invest in quality hosting

- Use a malware scanner tool to detect hacks

- Back up your website regularly

- Hide your login URL and limit login attempts to stop brute force attacks
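To show what limiting login attempts means in practice, here is a minimal in-memory sketch. Most content management systems offer a plugin or setting for this, so you would rarely write it yourself. The class name `LoginAttemptLimiter`, the five-attempt limit, and the 15-minute window are assumptions chosen for illustration.

```python
import time
from collections import defaultdict, deque


class LoginAttemptLimiter:
    """Block a key (such as an IP address) after too many failed logins in a time window."""

    def __init__(self, max_attempts=5, window_seconds=900):
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)  # key -> timestamps of recent failures

    def _prune(self, key, now):
        """Drop failures that are older than the window and return what remains."""
        attempts = self._failures[key]
        while attempts and now - attempts[0] >= self.window_seconds:
            attempts.popleft()
        return attempts

    def is_blocked(self, key, now=None):
        now = time.monotonic() if now is None else now
        return len(self._prune(key, now)) >= self.max_attempts

    def record_failure(self, key, now=None):
        now = time.monotonic() if now is None else now
        self._prune(key, now).append(now)


limiter = LoginAttemptLimiter(max_attempts=3, window_seconds=60)
for second in (0, 1, 2):
    limiter.record_failure("203.0.113.7", now=second)
print(limiter.is_blocked("203.0.113.7", now=3))
```

The `now` parameter exists so the behavior can be checked without waiting. In real use you would leave it out and let the clock supply the time.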

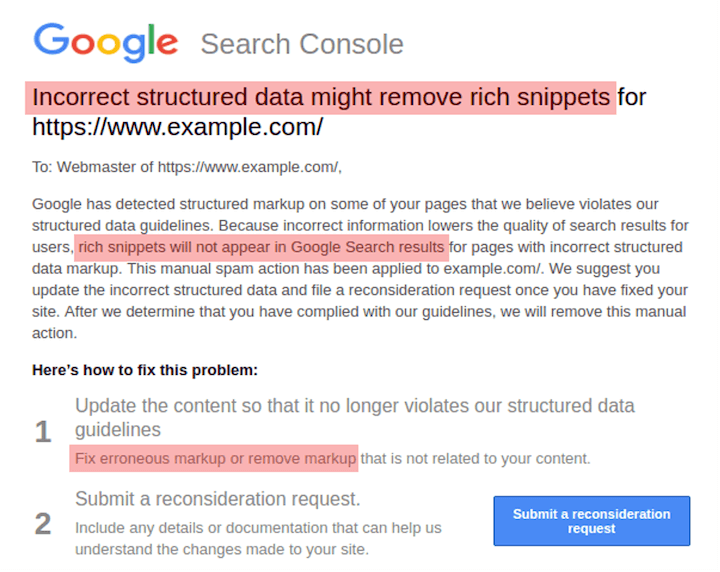

7. Abusing structured data markup

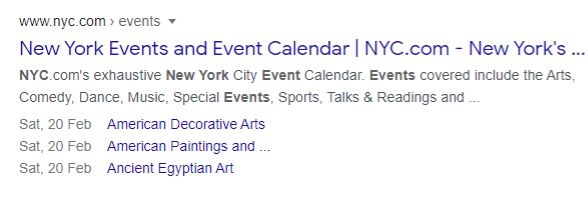

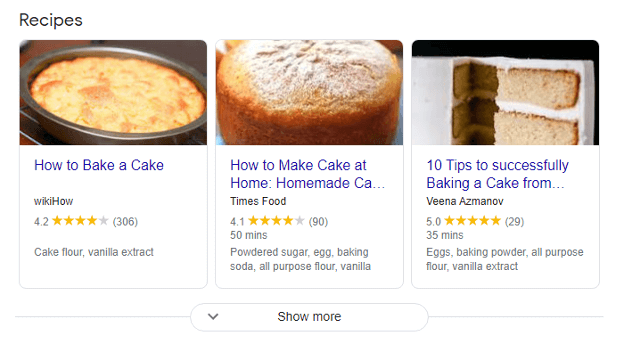

Structured data markup is code that helps Google display your site more attractively in search results, such as by showing star ratings and the number of reviews.

For example, here is event schema markup:

…and here is recipe schema markup:

However, if Google detects that you are using structured data that is irrelevant to the page’s content or misleading to users, you may receive a manual penalty.

How to prevent the structured data penalty

This is another penalty that deals with black hat SEO. Here’s what to do:

- Don’t add fake reviews to increase CTR. Follow these appropriate ways of getting real Google reviews.

- Only use structured data that makes sense for the content you are marking up.

- Make sure the content you mark up is visible to readers.

- Don’t add any schema markup that relates to illegal activities, violence, or any prohibited content.
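One way to keep markup honest is to generate it from the same data that appears on the page, so the two can’t drift apart. The sketch below builds a schema.org Event snippet in JSON-LD. The function name `event_json_ld` and the event details are hypothetical, and a real page may need more properties than the few shown here.

```python
import json


def event_json_ld(name, start_date, venue, address):
    """Return a JSON-LD script tag describing an event that is visible on the page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": name,
        "startDate": start_date,  # ISO 8601 date or date-time
        "location": {
            "@type": "Place",
            "name": venue,
            "address": address,
        },
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = event_json_ld(
    name="Spring Widget Workshop",
    start_date="2025-04-12T10:00",
    venue="Example Community Hall",
    address="123 Example Street, Springfield",
)
print(snippet)
```

Every value passed in should also be readable somewhere on the page itself, in line with the guidance above about marking up only visible content.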

Stay on top of Google penalties to avoid drops in ranking

Whether they’re algorithmic or manual, Google penalties hurt your ranking and traffic. You can implement fixes to remove the penalty, but you may or may not recover your traffic and ranking. That’s why it’s so important to do what you can to prevent them in the first place. In this post we covered seven Google penalties you can prevent:

- User-generated spam

- Thin content

- Keyword stuffing

- Hidden text

- Unnatural links to your site

- Hacked website

- Structured data abuse

By ceasing any black hat SEO practices, implementing security and moderation tools on your site, and focusing on truly quality content, you can avoid Google penalties, improve your ranking and traffic, and protect your site from hackers.

About the author

Jyoti Ray is the founder of WPMyWeb.com, where he writes about blogging, WordPress tutorials, hosting, affiliate marketing, and more.